Engineering

Laitinen Tero

Sep 18, 2023

Enhancing User Experiences with Advanced Image Formats

Efficient image delivery lies at the heart of successful online experiences. It affects both user engagement and search engine optimization (SEO) rankings. In early 2021, we identified a need to expand beyond our conventional JPEG encoding service and began to explore more innovative image processing solutions.

By adopting the modern WebP and AVIF formats, we aimed to improve user experience and SEO metrics. Though superior in compression, these formats were computationally more demanding, complicating their integration into our existing on-demand encoding service. To tackle this, we shifted to an ahead-of-time encoding approach, triggering batch processing for all new images. Ultimately, in order to manage the computational cost, we focused on lazily encoding only those format-size combinations that users requested.

This new approach eased server loads, sped up image delivery, and made using larger, high-quality visuals possible, elevating user experience and SEO performance.

Determining Quality Values for AVIF and WebP

Informed by a comparison of WebP and AVIF file sizes at the same similarity metric, we aimed for a median size reduction of 20% for WebP and 40% for AVIF compared to JPEG, roughly matching the 85th percentile results reported in that comparison.

After experimenting with a set of sample images, we found that AVIF (q=58, speed=1) and WebP (q=82) could match the JPEG (q=85) quality without any noticeable loss. We validated these findings using a simple web application to compare formats and evaluate quality degradation.

Adding WebP and AVIF to On-Demand Image Encoding Service

We incorporated WebP and AVIF into “lens”, our existing Rust-based image proxy service, using the ravif and webp packages. For AVIF, we opted for speed setting 6, which ensured that an image was encoded in under a second. Speed setting 5 took up to 3-4 seconds, which was unacceptable for on-demand encoding.

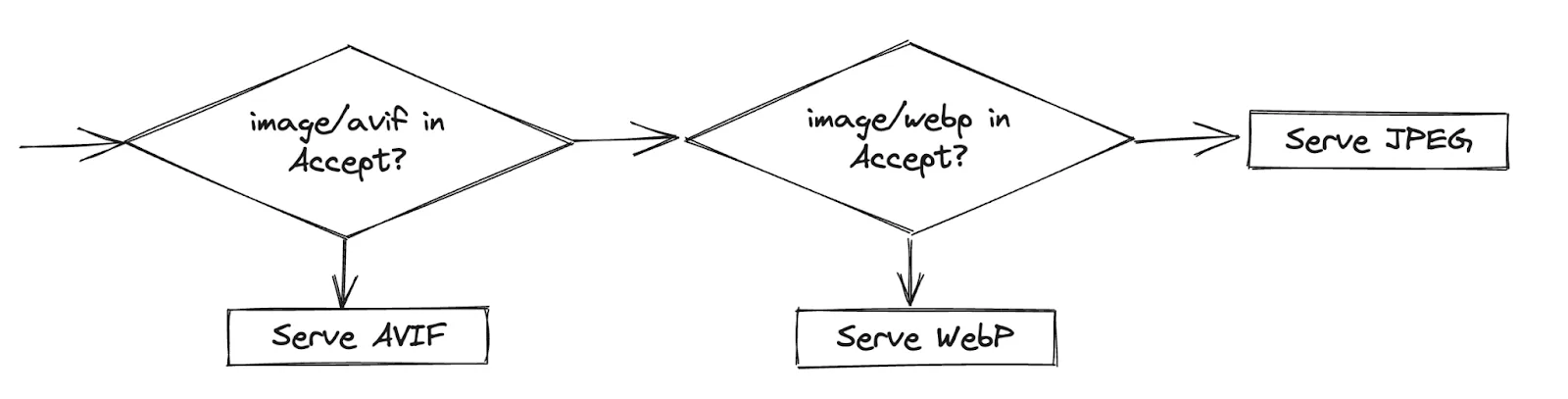

To serve the most advanced format supported by the client (browser or mobile application), we leveraged HTTP content negotiation, where the client specifies its preferred formats in the “Accept” header. We modified CloudFront's configuration to forward the “Accept” header to our servers. Additionally, we configured our server to return a "Vary: Accept" response, ensuring CloudFront correctly cached and served images based on each request's “Accept” header.
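The negotiation step can be sketched roughly as follows. This is a minimal illustration, not the code of “lens” (which is written in Rust): the names `negotiateFormat` and `responseHeaders` are hypothetical, and the sketch assumes that only an explicit `image/avif` or `image/webp` entry counts as support, since wildcards such as `image/*` say nothing about a specific codec.

```typescript
type ImageFormat = 'avif' | 'webp' | 'jpeg';

// Server-side preference order: most advanced format first.
const PREFERENCE: ReadonlyArray<{ mime: string; format: ImageFormat }> = [
  { mime: 'image/avif', format: 'avif' },
  { mime: 'image/webp', format: 'webp' },
];

// Collect the media types listed in an Accept header, skipping entries with q=0.
const acceptedTypes = (accept: string): Set<string> => {
  const types = new Set<string>();
  for (const part of accept.split(',')) {
    const [type, ...params] = part.split(';').map((s) => s.trim().toLowerCase());
    if (!type) continue;
    const q = params.find((p) => p.startsWith('q='));
    const weight = q === undefined ? 1 : Number.parseFloat(q.slice(2));
    if (weight !== 0) types.add(type);
  }
  return types;
};

// Pick the most advanced format the client explicitly accepts, else JPEG.
const negotiateFormat = (accept: string | undefined): ImageFormat => {
  const types = acceptedTypes(accept ?? '');
  const match = PREFERENCE.find(({ mime }) => types.has(mime));
  return match?.format ?? 'jpeg';
};

// "Vary: Accept" tells the CDN to keep separate cache entries per Accept value.
const responseHeaders = (format: ImageFormat): Record<string, string> => ({
  'Content-Type': `image/${format}`,
  Vary: 'Accept',
});
```

A browser sending `image/avif,image/webp,*/*` would receive AVIF under this sketch, while a client sending only `*/*` would receive JPEG.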

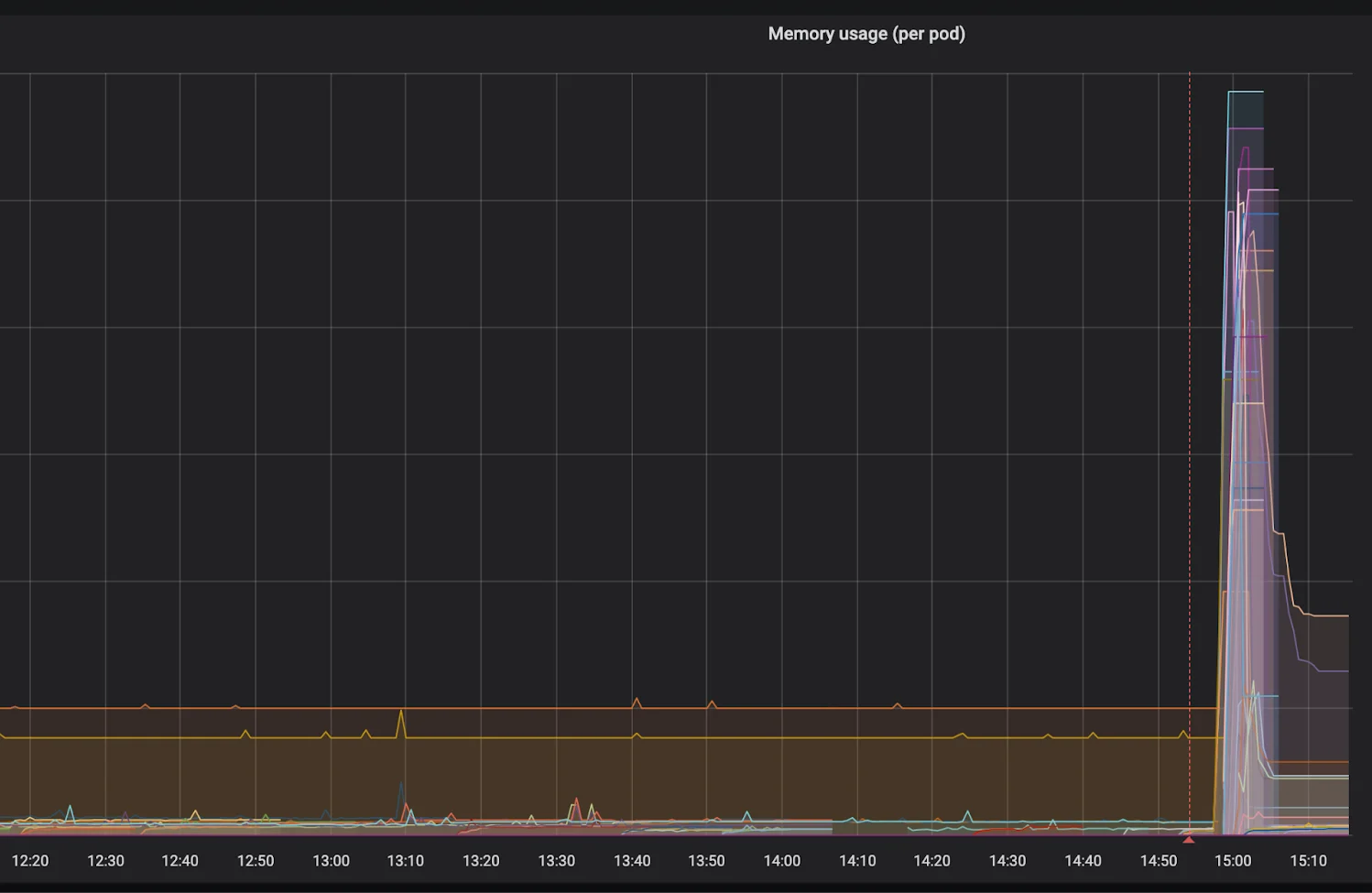

Deployment proved challenging. We had overlooked how much a CloudFront cache hit rate above 98% normally shielded our servers, and what a cold cache would mean for them. The heavier AVIF and WebP encoding overloaded “lens”, and the Horizontal Pod Autoscaler (HPA) couldn't spawn pods quickly enough. Handling numerous requests simultaneously also caused a significant memory spike, which led to a swift rollback. After the short incident, we estimated that the maximum number of replicas fell far short of what would have been necessary.

Ahead-of-Time Encoding using Node.js

Given the challenges faced with the "lens" service, it was clear that a shift to ahead-of-time encoding was necessary. While Rust provided excellent performance, our decision to use Node.js was influenced by the prevalent use of TypeScript across multiple teams, the reduced emphasis on Rust maintenance, and the availability of Sharp, a high-performance image processing library.

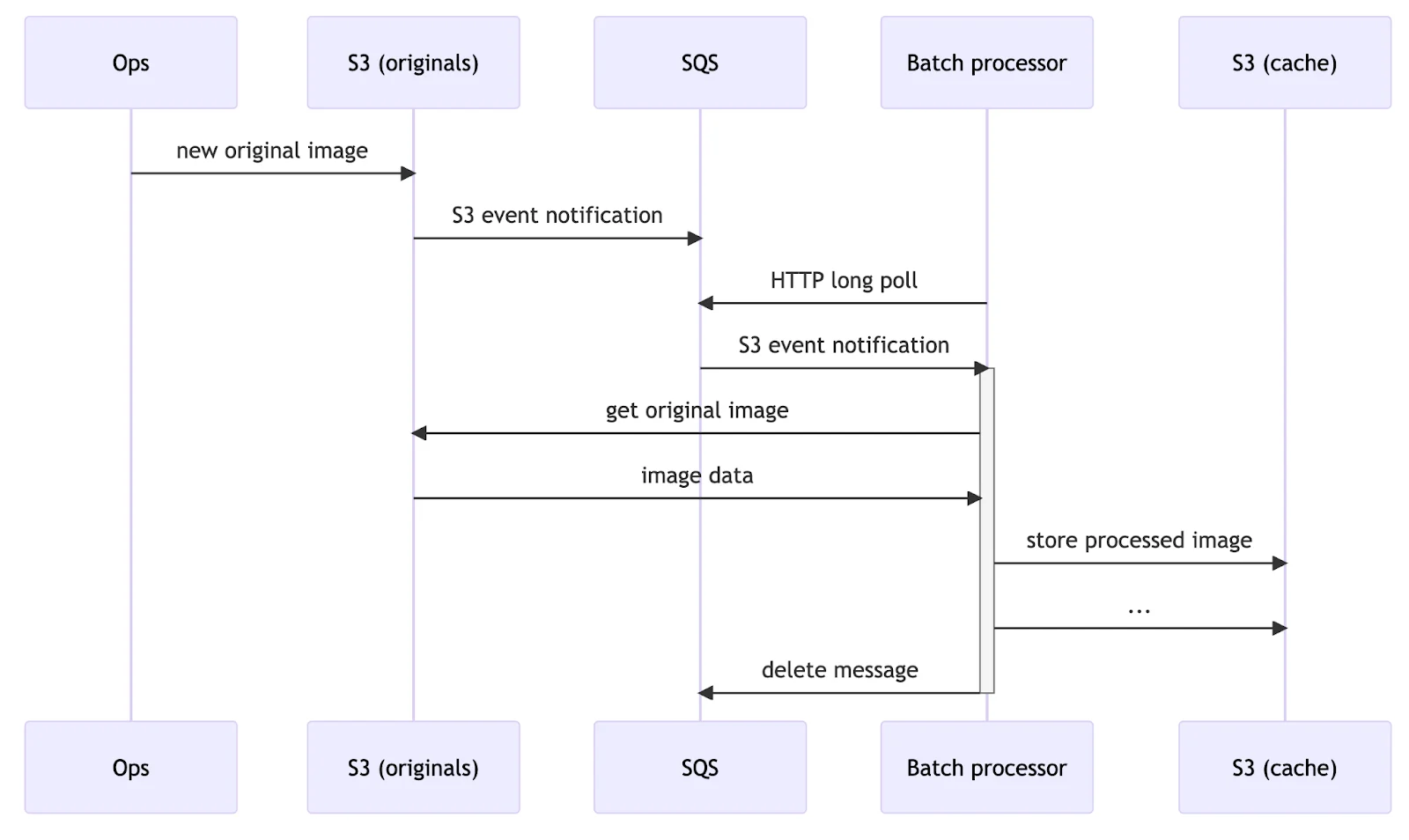

The new service, “image-resizer”, has two deployments: one for batch processing and another for proxying. Original images are added into multiple S3 buckets, triggering S3 event notifications. The batch processor receives these notifications through an SQS queue, encodes images into predefined sizes and formats, and stores them in the service’s S3 bucket. The proxy's primary role is to serve pre-processed images from the service’s S3 bucket.

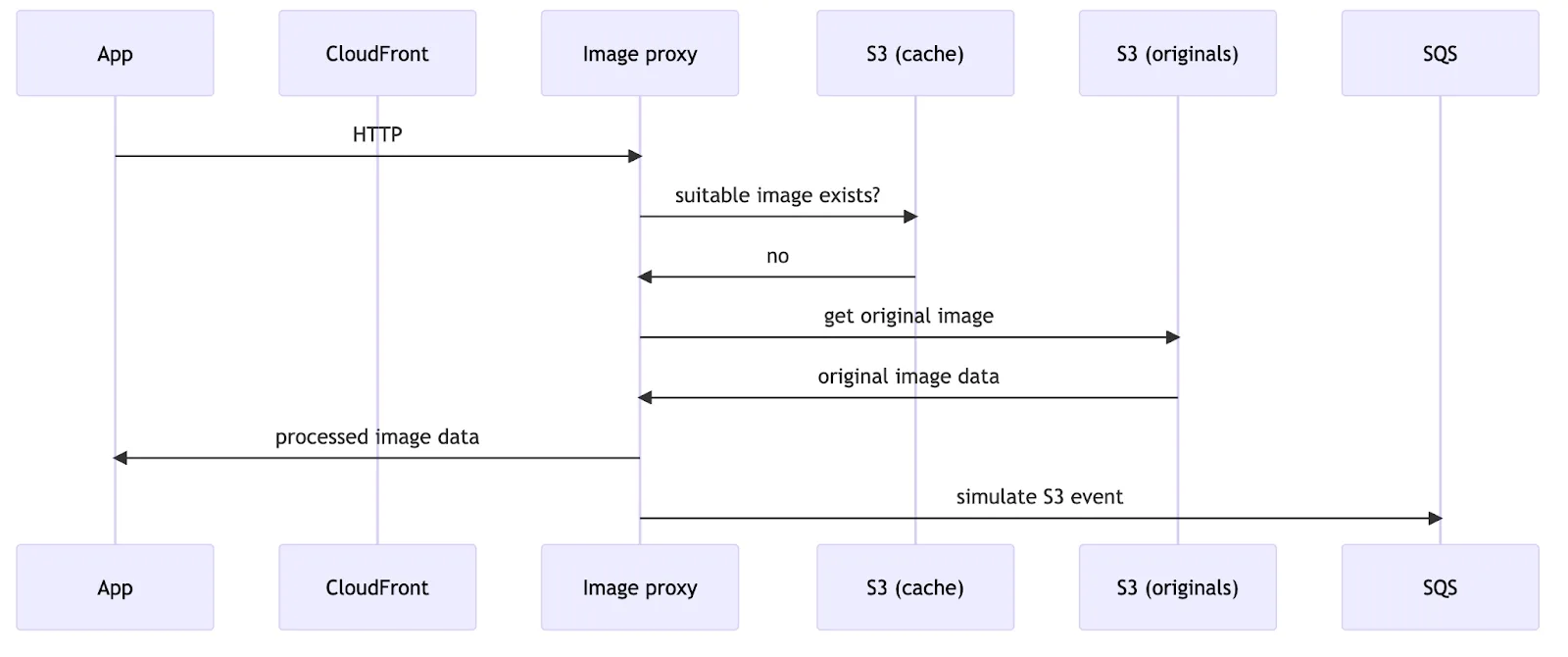

However, the S3 bucket used as a cache may not contain an image in the requested size for two reasons. First, many images were already present when the service was launched. Second, a recently added image might be requested before the batch processor manages to encode and cache it. In either case, the proxy resizes and encodes the original image as a JPEG image on demand. To ensure the batch processor eventually encodes the requested image in all formats and sizes, the proxy emits a simulated S3 event notification. JPEGs encoded on-demand by the proxy have a shorter Cache-Control max-age, allowing users to benefit from advanced formats faster.
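The proxy's decision on a cache miss can be sketched as a pure function. Everything named here is an assumption made for illustration: the key layout, the two max-age values, and the helper names are hypothetical, and the simulated notification carries only a minimal subset of the fields found in a real S3 event (real notifications also URL-encode the object key).

```typescript
type Format = 'avif' | 'webp' | 'jpeg';

// Hypothetical values; the article only states that on-demand JPEGs get a shorter max-age.
const CACHED_MAX_AGE_SECONDS = 24 * 60 * 60;
const ON_DEMAND_MAX_AGE_SECONDS = 5 * 60;

// Minimal subset of the S3 event notification shape.
interface SimulatedS3Event {
  Records: Array<{
    eventSource: 'aws:s3';
    eventName: 'ObjectCreated:Put';
    s3: { bucket: { name: string }; object: { key: string } };
  }>;
}

interface ProxyPlan {
  source: 'cache' | 'on-demand';
  format: Format;
  cacheControl: string;
  simulatedEvent?: SimulatedS3Event;
}

const simulateS3Event = (bucket: string, key: string): SimulatedS3Event => ({
  Records: [
    {
      eventSource: 'aws:s3',
      eventName: 'ObjectCreated:Put',
      s3: { bucket: { name: bucket }, object: { key } },
    },
  ],
});

// Decide how to answer a request given the set of keys already in the cache bucket.
const planResponse = (
  cachedKeys: ReadonlySet<string>,
  original: { bucket: string; key: string },
  width: number,
  format: Format,
): ProxyPlan => {
  const cacheKey = `${original.bucket}/${original.key}/${width}.${format}`;
  if (cachedKeys.has(cacheKey)) {
    return { source: 'cache', format, cacheControl: `public, max-age=${CACHED_MAX_AGE_SECONDS}` };
  }
  // Miss: serve a JPEG now with a short max-age, and ask the batch processor to catch up.
  return {
    source: 'on-demand',
    format: 'jpeg',
    cacheControl: `public, max-age=${ON_DEMAND_MAX_AGE_SECONDS}`,
    simulatedEvent: simulateS3Event(original.bucket, original.key),
  };
};
```

The short max-age on the fallback means CloudFront asks again soon, by which time the batch processor has ideally stored the AVIF or WebP variant.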

Gradual Deployment of the Image-Resizer Service

After the "lens" deployment, where we misjudged the CloudFront cache hit rate and server capacity and had to roll back swiftly, we took a more cautious approach to deploying "image-resizer".

First, we tested on wolt.com, keeping mobile clients on the old CloudFront and "lens" setup. Focusing on the web client gave us an environment that was easy to control and revert if needed.

By the time of deployment, the batch-encoding service had been processing S3 event notifications for new images for a while. However, older images hadn't been addressed, leading us to expect a sudden spike in simulated S3 event notifications for these images. Using feature flags, we let a small part of web traffic use the new service. For these sessions, we modified the image URLs to point to "image-resizer". Watching server loads and the number of SQS messages in the batch processor’s queue, we slowly increased traffic to the new system.

Once satisfied with its stability, we configured the backend to send out "image-resizer" URLs by default, bringing the advanced image formats to mobile users.

Addressing Queue Surges with Event-Driven Autoscaling

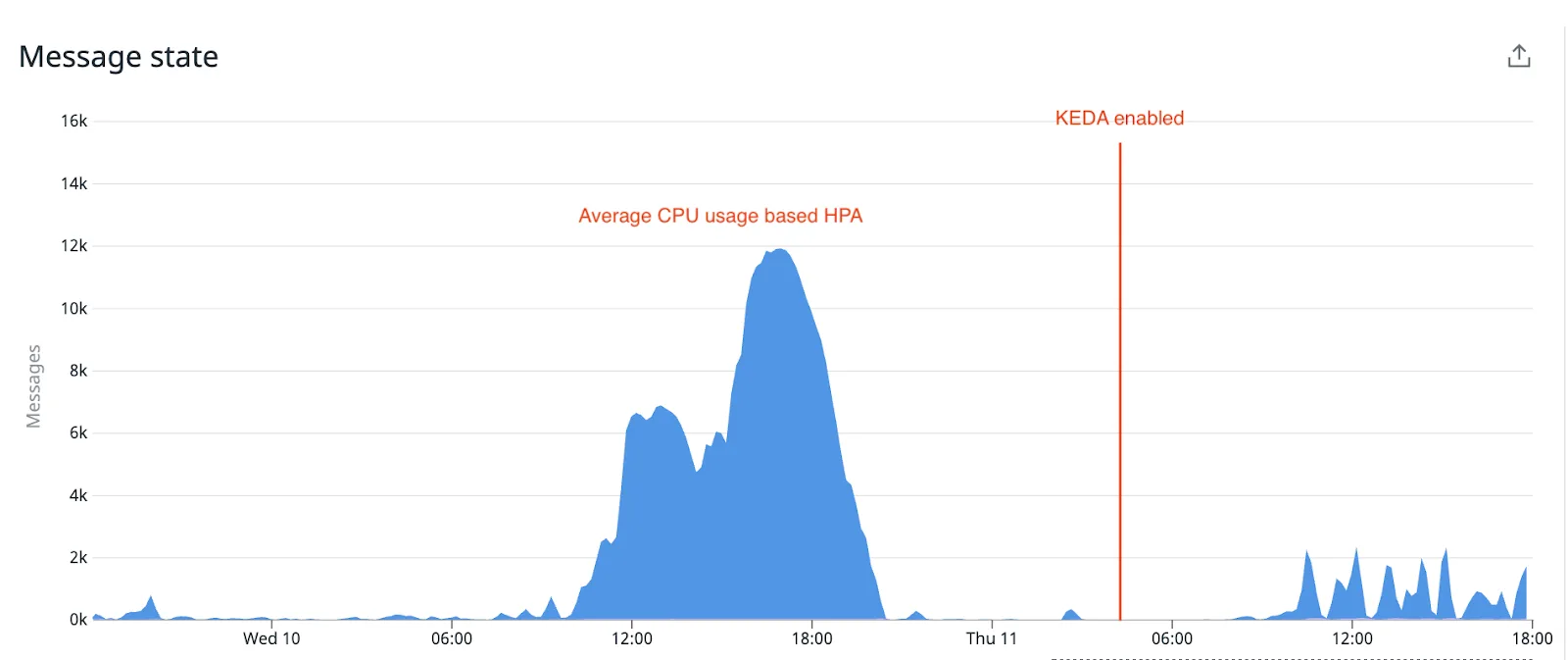

When a surge of new images led to an SQS queue buildup, we encountered difficulties scaling the batch processing service using the average-CPU-utilization-based HPA scaling rule. Typically, when each message corresponds to a unique image, the service is CPU-bound, as it's actively encoding images.

However, this dynamic shifted due to the potential for duplicate simulated S3 event notifications. The SQS queue backlog led to the proxy app serving an increasing number of client requests as on-demand encoded JPEGs with a short Cache-Control max-age. As those entries expired quickly, CloudFront fell back to our proxy app more and more often, which then generated additional simulated S3 event notifications, amplifying the likelihood of duplicates in the SQS queue.

In this scenario, the single-threaded nature of the batch processor became evident. It would sequentially fetch a message from SQS, verify the image's presence in its dedicated S3 bucket, and, after successful processing, delete the message. Each step introduced network latency, resulting in significant idle periods where the CPU remained underutilized. Although concurrently processing multiple messages could improve CPU use, it would also introduce some complexity and unpredictability in memory consumption, a trade-off we weren't willing to make then.

We turned to KEDA (Kubernetes Event-Driven Autoscaling) to address these challenges. Switching to KEDA allowed the service to scale based on the actual count of unprocessed SQS messages, ensuring a more responsive and efficient workload handling.
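The difference between the two scaling rules comes down to simple arithmetic, sketched below. The CPU rule follows the standard HPA formula (desired = ceil(current × currentMetric / targetMetric)), simplified here by ignoring tolerance and stabilization windows. The queue rule divides the backlog by a target number of messages per replica. All concrete numbers and names are hypothetical.

```typescript
interface QueueScalingConfig {
  targetMessagesPerReplica: number;
  minReplicas: number;
  maxReplicas: number;
}

// Simplified HPA rule: scale proportionally to observed vs. target CPU utilization.
const cpuBasedReplicas = (
  currentReplicas: number,
  cpuUtilizationPercent: number,
  targetUtilizationPercent: number,
): number => Math.ceil(currentReplicas * (cpuUtilizationPercent / targetUtilizationPercent));

// Queue-length rule: one replica per `targetMessagesPerReplica` unprocessed messages,
// clamped to the configured bounds.
const queueBasedReplicas = (unprocessedMessages: number, cfg: QueueScalingConfig): number => {
  const raw = Math.ceil(unprocessedMessages / cfg.targetMessagesPerReplica);
  return Math.min(cfg.maxReplicas, Math.max(cfg.minReplicas, raw));
};
```

With four replicas idling at 30% CPU against a 70% target, the CPU rule asks for two replicas even though the backlog is growing. With the same backlog of, say, 950 messages and a target of 100 per replica, the queue rule asks for ten.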

Adding Image Overlays for Regulations

In e-commerce, rules often change. Some countries started requiring warning labels on product images for safety reasons. As an additional complication, the label and the product image had to be merged into a single image rather than layered on the client side.

To comply with this new regulation, we used Sharp's image composition API to merge images. As overlay images had to be the same size or smaller than the main image, we resized them using the {fit:'inside'} setting. Our system could also handle adding multiple overlays when needed. We bypassed the batch processor for these tasks and served on-demand encoded JPEGs from the proxy app since we could not include the overlay image details in the S3 event notification format.

```typescript
import sharp from 'sharp';

// Resize the base image to the target width, composite each overlay on top of it,
// and return the result as a JPEG buffer.
export const overlayImages = async (
  baseImageBuffer: Buffer,
  overlayImageBuffers: Array<Buffer>,
  { width, quality }: { quality: number; width: number },
): Promise<Buffer> => {
  const resizedBaseImage = await sharp(baseImageBuffer).resize(width).toBuffer();
  const img = sharp(resizedBaseImage);
  const metadata = await img.metadata();
  const imgHeight = metadata.height ?? 0;

  // Overlays must fit within the resized base image while keeping their aspect ratio.
  const resizedOverlays = overlayImageBuffers.map((overlayImageBuffer) =>
    sharp(overlayImageBuffer).resize(width, imgHeight, { fit: 'inside' }),
  );

  return img
    .composite(
      await Promise.all(
        resizedOverlays.map(async (overlay) => ({
          input: await overlay.toBuffer(),
        })),
      ),
    )
    .toFormat('jpeg', { quality })
    .toBuffer();
};
```

Yet, we faced a small hurdle after deployment. With an initial 24-hour max-age set for the Cache-Control response header, we found that an overlay image required an update. The cache's duration constrained us. Unable to pinpoint specific URLs for focused invalidation, and with a total CloudFront cache reset being infeasible, we could have changed the name of the overlay image in the query string parameter to bypass the cache. However, this would have required a change in the backend, so we decided to wait it out. Learning from this episode, we adjusted to a more cautious one-hour max-age for future deployments until the set of overlay images stabilized.

Serving Transparent Images for Promotions

Our image proxy was originally used for areas where transparency wasn't needed, like product images. However, when showcasing a promotional banner, we needed an image with a transparent background. Our proxy app initially supported three formats: JPEG, WebP, and AVIF. While AVIF and WebP can handle transparency and compress well, not all devices support them. Our fallback, JPEG, does not support transparency, prompting us to consider PNG, a format that offers transparency and is supported by most devices. The downside of PNG, however, is its poor compression ratio.

As a quick fix to serve transparent images through our proxy service, we added a query string parameter to trigger streaming the original image, allowing access to PNGs.

Later, we enhanced the proxy app by introducing a feature that checks and caches an image's metadata using Sharp's Input metadata API. The proxy checks if the requested image has an alpha channel, allowing it to fall back to PNG instead of JPEG if WebP and AVIF are absent in the ‘Accept’ header. Because original images can be large, and we wanted to avoid loading them every time, we saved the much smaller metadata in the service’s S3 bucket to reduce network traffic.
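The fallback rule can be sketched as below, assuming the cached metadata includes the `hasAlpha` flag that Sharp reports for an input image. The `CachedMetadata` shape and the function name are hypothetical, and the Accept parsing is deliberately simplified.

```typescript
type OutputFormat = 'avif' | 'webp' | 'png' | 'jpeg';

// Hypothetical shape of the small metadata record stored next to the encoded images.
interface CachedMetadata {
  width: number;
  height: number;
  hasAlpha: boolean;
}

const chooseOutputFormat = (accept: string, metadata: CachedMetadata): OutputFormat => {
  // Keep only the media type of each Accept entry, dropping parameters such as q-values.
  const accepted = accept
    .toLowerCase()
    .split(',')
    .map((part) => (part.split(';')[0] ?? '').trim());

  if (accepted.includes('image/avif')) return 'avif';
  if (accepted.includes('image/webp')) return 'webp';
  // Neither modern format is accepted: preserve transparency with PNG, else use JPEG.
  return metadata.hasAlpha ? 'png' : 'jpeg';
};
```

Only clients that accept neither modern format and request an image with an alpha channel pay the PNG size penalty under this rule.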

Optimizing CPU Usage with Lazy Encoding

As Wolt's demands grew, our image batch processor emerged as the top CPU consumer. Upon examination, we found that only specific resolution and format combinations were requested for most images. Maintaining the older approach based on S3 event notifications seemed inefficient.

To address this, we shifted to 'lazy encoding.' Rather than pre-encoding every possible size and format, we encode only when a specific combination is requested. When receiving a request, the proxy service immediately encodes the image into JPEG and serves it. Simultaneously, it sends a task with the requested format and size details to the SQS queue. The batch processor eventually handles the task, ensuring the combination is available for future requests.
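The flow can be illustrated with in-memory stand-ins for the S3 cache bucket and the SQS queue. This is a sketch under stated assumptions, not the service's code: the task shape, the key layout, and the function names are hypothetical, and actual encoding is replaced by simply recording the variant as available.

```typescript
type Format = 'avif' | 'webp' | 'jpeg';

// Hypothetical task message: one requested size/format combination of one original.
interface EncodeTask {
  bucket: string;
  key: string;
  width: number;
  format: Format;
}

// Hypothetical key layout for encoded variants in the service's bucket.
const variantKey = (t: EncodeTask): string => `${t.bucket}/${t.key}/w${t.width}.${t.format}`;

// In-memory stand-ins for the S3 cache bucket and the SQS queue.
const encodedVariants = new Set<string>();
const taskQueue: string[] = [];

// Proxy side: serve the cached variant if present; otherwise fall back to an
// on-demand JPEG and enqueue a task for exactly the requested combination.
const handleRequest = (task: EncodeTask): 'cached' | 'jpeg-on-demand' => {
  if (encodedVariants.has(variantKey(task))) return 'cached';
  taskQueue.push(JSON.stringify(task));
  return 'jpeg-on-demand';
};

// Batch processor side: take one task, skip it if a duplicate was already handled.
const processNextTask = (): 'encoded' | 'skipped-duplicate' | 'idle' => {
  const body = taskQueue.shift();
  if (body === undefined) return 'idle';
  const key = variantKey(JSON.parse(body) as EncodeTask);
  if (encodedVariants.has(key)) return 'skipped-duplicate';
  encodedVariants.add(key); // a real processor would encode and upload the image here
  return 'encoded';
};
```

Two requests for the same missing variant enqueue two tasks; the processor encodes the first and skips the second, after which later requests are served from the cache.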

After rolling out this change, we saw a significant decrease in our CPU usage. Moreover, our storage requirements grew at a slower pace than before.

Conclusions

Our adoption of modern image formats has enhanced both user experience and SEO. Furthermore, leveraging Sharp has made it straightforward to develop additional capabilities, including support for transparent images and overlays. Looking ahead, several avenues present potential improvements.

Utilizing AI to upscale images could allow us to offer crisper visuals even when original images lack high resolution. To take this route, we would likely deploy a separate service using additional libraries to servers with GPUs, ensuring more predictable workloads and allowing our other services to incorporate image upscaling if needed.

Individually calibrating each image codec’s quality settings for every image would yield further gains. With a binary search method, we could determine the optimal image codec parameters given a maximum quality degradation threshold, using metrics like DSSIM. With an adjustable increase in the overall CPU consumption depending on the search tree depth, we could deliver optimized image sizes without compromising quality.
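Such a search might look like the following sketch. It assumes that degradation never increases as the quality setting rises, which is what makes binary search applicable, and it takes the measurement as an injected function: `measureDssim` is a hypothetical callback that would encode the image at the given quality and compare it with the original.

```typescript
// Find the lowest quality setting whose measured degradation stays within `maxDssim`.
// Returns undefined when even the highest quality exceeds the threshold.
const findLowestAcceptableQuality = (
  measureDssim: (quality: number) => number,
  maxDssim: number,
  minQuality = 1,
  maxQuality = 100,
): number | undefined => {
  if (measureDssim(maxQuality) > maxDssim) return undefined;

  let lo = minQuality;
  let hi = maxQuality; // invariant: `hi` is known to be acceptable
  while (lo < hi) {
    const mid = Math.floor((lo + hi) / 2);
    if (measureDssim(mid) <= maxDssim) {
      hi = mid; // mid is good enough, try lower qualities
    } else {
      lo = mid + 1; // mid degrades too much, go higher
    }
  }
  return hi;
};
```

Each probe costs one trial encode, so a range of 1 to 100 needs at most eight encodes per image and codec. Capping the number of probes would trade some file size for less CPU time.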

Switching to parallel processing could enhance the CPU utilization of our single-threaded batch processor, resulting in more efficient server utilization. However, care is essential to avoid memory spikes when handling multiple large images simultaneously.
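One way to bound that risk is to cap the number of tasks in flight. The helper below is a generic sketch of the idea, not part of the existing service; `mapWithLimit` is a hypothetical name, and a suitable limit would depend on image sizes and the memory available per pod.

```typescript
// Run `worker` over all items with at most `limit` tasks in flight at any time.
// Results are returned in input order.
const mapWithLimit = async <T, R>(
  items: readonly T[],
  limit: number,
  worker: (item: T) => Promise<R>,
): Promise<R[]> => {
  const results = new Array<R>(items.length);
  let next = 0;

  // Each lane repeatedly claims the next unprocessed index until none are left.
  const runLane = async (): Promise<void> => {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index] as T);
    }
  };

  const laneCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: laneCount }, runLane));
  return results;
};
```

With a limit of two, the processor could overlap one message's network waits with another's encoding while never holding more than two images in memory.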

Storage management for our S3 cache bucket will also need attention in due course. Standard S3 lifecycle rules can prune objects past a certain age, but we need a more discerning method that tracks the active set of objects and clears only the inactive ones.

Ample possibilities in image processing encourage us to develop our capabilities further. We anticipate exciting findings ahead that will enrich the experience of using our applications.